I’ve been spending a lot of time lately thinking about the “context window problem.” We’ve all been there: you have a massive 200-page document or a complex coding feature to implement, and you’re forced to choose between a slow, memory-hungry long-context prompt or an expensive, multi-hour fine-tuning session. It’s a frustrating trade-off.

But last week, I dug into some fascinating new research from Sakana AI that basically says: “Why not both?”

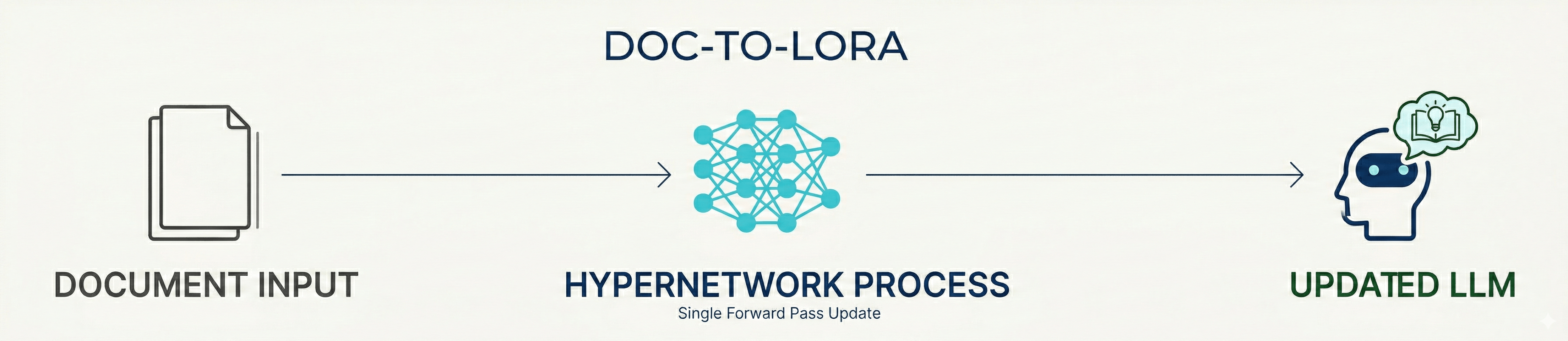

They’ve introduced two new frameworks, Doc-to-LoRA (D2L) and Text-to-LoRA (T2L), that use something called hypernetworks to instantly internalize information. We’re talking about moving from “reading” a document to “knowing” it in less than a second.

The Problem with Standard Approaches

Passing long documents into an LLM prompt is the path of least resistance, but it hits three distinct walls at scale:

High Latency: Attention cost scales quadratically with context length, so every token you add pushes time-to-first-token up faster than linearly. If a 10K-token prompt takes 2 seconds, a 200-page PDF at around 200K tokens takes over 80 seconds.

Memory Spikes: A 128K-token document consumes roughly 12GB of VRAM just for the KV cache. That’s on top of the model weights themselves, before a single output token is generated. The number of concurrent users per GPU collapses fast.

RAG Blindspots: Chunking breaks holistic understanding. RAG will find local facts but miss cross-document synthesis, the kind of reasoning that requires holding two distant paragraphs in mind simultaneously. The retriever returns the right chunks, but no single chunk contains the cross-regional signal you actually needed.
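To see where figures like these come from, here’s a back-of-the-envelope estimator. It’s a sketch under stated assumptions: the KV-cache formula is the standard one (keys plus values, per layer, per KV head, per token), but the model configuration below (24 layers, 4 KV heads, head dimension 256, 16-bit values) is hypothetical, picked for illustration rather than taken from any specific checkpoint.

```python
# Back-of-the-envelope long-context costs.
# ASSUMPTION: the model config used below is hypothetical, not a real checkpoint.

def kv_cache_bytes(seq_len: int, n_layers: int, n_kv_heads: int,
                   head_dim: int, bytes_per_value: int = 2) -> int:
    """Bytes needed to cache keys and values for every layer and every token."""
    # Leading factor of 2: one cached tensor for keys, one for values.
    return 2 * n_layers * n_kv_heads * head_dim * seq_len * bytes_per_value


def attention_cost_ratio(long_len: int, short_len: int) -> float:
    """Growth of the quadratic attention-score term when the prompt gets longer."""
    return (long_len / short_len) ** 2


# Hypothetical config: 24 layers, 4 KV heads of dimension 256, bf16 (2 bytes/value).
size = kv_cache_bytes(seq_len=128 * 1024, n_layers=24, n_kv_heads=4, head_dim=256)
print(f"KV cache for 128K tokens: {size / 2**30:.1f} GiB")  # 12.0 GiB

ratio = attention_cost_ratio(200_000, 10_000)
print(f"Quadratic attention term, 200K vs 10K tokens: {ratio:.0f}x")  # 400x
```

The quadratic term alone grows 400x for a 20x longer prompt. Real prefill also includes costs that grow linearly with length (the MLP layers, for instance), which is why end-to-end latency in the 10K-vs-200K example grows less steeply, at about 40x. Your model’s actual cache size depends on its layer count, KV heads, head dimension, and precision.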

Under the Hood: Amortized Adaptation

If you’re like me and want to know what’s actually happening in the code, the key word is amortization.

Traditionally, if you wanted to “distill” a document into a model’s parameters, you’d use Context Distillation (CD). You’d train a student model to mimic a teacher model that has the full context. The problem? It takes 40 to 100 seconds per document. Sakana AI bypasses this by paying a “one-time fee” during a meta-training phase to train a hypernetwork.

The Hypernetwork Architecture

The hypernetwork ($H_\phi$) isn’t just a simple MLP. It uses a Perceiver-based backbone.

- Input: It takes the internal token activations from a frozen base LLM (like Gemma) as it “reads” the document.

- Processing: The Perceiver architecture allows it to handle variable-length inputs and map them into fixed-shape representations.

- Output: It predicts the $A$ and $B$ matrices for a Low-Rank Adaptation (LoRA) module.
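To make the data flow concrete, here’s a toy NumPy sketch of a Perceiver-style hypernetwork. It is not Sakana’s implementation: the class name `ToyPerceiverHypernet`, the single cross-attention layer, the choice of one latent query per LoRA rank component, and all dimensions are my own assumptions, and the weights are random rather than meta-trained. What it does show is the key property: inputs of any length map to LoRA factors of a fixed shape.

```python
import numpy as np

rng = np.random.default_rng(0)


def softmax(x: np.ndarray, axis: int = -1) -> np.ndarray:
    x = x - x.max(axis=axis, keepdims=True)  # subtract max for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


class ToyPerceiverHypernet:
    """Hypothetical hypernetwork: token activations -> LoRA factors (A, B).

    Untrained random weights; this only illustrates shapes and data flow.
    """

    def __init__(self, d_model: int, d_in: int, d_out: int, rank: int, d_latent: int = 64):
        s = 0.02
        # ASSUMPTION: one learned latent query per LoRA rank component.
        self.latents = rng.normal(0, s, (rank, d_latent))
        self.w_q = rng.normal(0, s, (d_latent, d_latent))
        self.w_k = rng.normal(0, s, (d_model, d_latent))
        self.w_v = rng.normal(0, s, (d_model, d_latent))
        # Output heads: each latent row yields one row of A and one column of B.
        self.w_a = rng.normal(0, s, (d_latent, d_in))
        self.w_b = rng.normal(0, s, (d_latent, d_out))

    def __call__(self, activations: np.ndarray):
        # activations: (n_tokens, d_model) from the frozen base LLM; n_tokens varies.
        q = self.latents @ self.w_q                      # (rank, d_latent)
        k = activations @ self.w_k                       # (n_tokens, d_latent)
        v = activations @ self.w_v                       # (n_tokens, d_latent)
        attn = softmax(q @ k.T / np.sqrt(q.shape[-1]))   # (rank, n_tokens)
        h = attn @ v                                     # (rank, d_latent): fixed shape
        a = h @ self.w_a                                 # (rank, d_in)
        b = (h @ self.w_b).T                             # (d_out, rank)
        return a, b


hyper = ToyPerceiverHypernet(d_model=128, d_in=128, d_out=128, rank=8)
for n_tokens in (300, 1024):
    acts = rng.normal(size=(n_tokens, 128))  # stand-in for frozen-LLM activations
    a, b = hyper(acts)
    print(n_tokens, a.shape, b.shape, (b @ a).shape)  # same factor shapes for both lengths
```

A real Perceiver stacks several cross- and self-attention blocks with normalization, and a practical hypernetwork would emit factors for many layers at once; this keeps just enough to show why variable-length input becomes a fixed-size adapter.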

Mathematically, the goal is to minimize the KL divergence between the teacher (the base model with context $c$ in its prompt) and the student (the base model with generated weights $\Delta W_c = H_\phi(c)$ and no context):

\[\min_{\phi}\; \mathbb{E}_{c}\Big[\mathrm{KL}\big(p_\theta(y \mid x, c)\,\big\|\,p_{\theta + H_\phi(c)}(y \mid x)\big)\Big]\]

By training on thousands of diverse contexts, the hypernetwork learns the “rule” for how weights should change to represent new info.
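The inner term of that objective is an ordinary forward KL between two next-token distributions. Here’s a minimal NumPy sketch of computing it from logits; the random logits are stand-ins (in real training, the teacher logits come from the frozen model with $c$ in the prompt, the student logits from $\theta + H_\phi(c)$ without it, and gradients flow only into $\phi$ through an autodiff framework).

```python
import numpy as np


def log_softmax(logits: np.ndarray) -> np.ndarray:
    shifted = logits - logits.max(axis=-1, keepdims=True)  # numerical stability
    return shifted - np.log(np.exp(shifted).sum(axis=-1, keepdims=True))


def kl_teacher_student(teacher_logits: np.ndarray, student_logits: np.ndarray) -> float:
    """Mean over positions of KL(p_teacher || p_student), i.e. forward KL."""
    log_p = log_softmax(teacher_logits)
    log_q = log_softmax(student_logits)
    p = np.exp(log_p)
    return float((p * (log_p - log_q)).sum(axis=-1).mean())


rng = np.random.default_rng(0)
vocab = 10
teacher = rng.normal(size=(5, vocab))  # stand-in: logits with context c in the prompt
student = rng.normal(size=(5, vocab))  # stand-in: logits from theta + H_phi(c), no context
print(kl_teacher_student(teacher, student))  # positive: distributions differ
print(kl_teacher_student(teacher, teacher))  # 0.0
```

The loss is zero only when the student, with no document in its prompt, predicts exactly what the teacher predicts with the document; that is the sense in which the generated weights “contain” the context.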

The Chunking Mechanism

How do you handle a document that’s 4x longer than the model’s native window? D2L uses a chunking mechanism. It breaks the document into 1,024-token pieces, generates a rank-8 LoRA for each, and then concatenates them. This allows the “parametric memory” to scale alongside the document length while maintaining near-perfect accuracy on “Needle-in-a-Haystack” tests.
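Assuming “concatenates” means stacking the per-chunk LoRA factors along the rank dimension (my reading, not confirmed against Sakana’s code), the combined adapter has rank 8 × the number of chunks, and its weight update equals the sum of the per-chunk updates. The sketch below checks that identity on a toy 4,096-token document, using a hypothetical `generate_lora` stand-in that returns random factors instead of calling a real hypernetwork.

```python
import numpy as np

rng = np.random.default_rng(0)
CHUNK_TOKENS = 1024  # chunk size from the text
RANK = 8             # per-chunk LoRA rank from the text
D_IN, D_OUT = 64, 64  # ASSUMPTION: toy layer dimensions


def chunk(tokens, size=CHUNK_TOKENS):
    """Split a token sequence into consecutive pieces of at most `size` tokens."""
    return [tokens[i:i + size] for i in range(0, len(tokens), size)]


def generate_lora(chunk_tokens):
    """Hypothetical stand-in for the hypernetwork: returns rank-8 factors (A, B)."""
    a = rng.normal(size=(RANK, D_IN))
    b = rng.normal(size=(D_OUT, RANK))
    return a, b


tokens = list(range(4 * CHUNK_TOKENS))  # toy document spanning 4 chunks
loras = [generate_lora(c) for c in chunk(tokens)]

# Concatenate along the rank dimension: A stacks rows, B stacks columns.
a_cat = np.concatenate([a for a, _ in loras], axis=0)  # (8 * n_chunks, D_IN)
b_cat = np.concatenate([b for _, b in loras], axis=1)  # (D_OUT, 8 * n_chunks)

delta_cat = b_cat @ a_cat                  # update from the concatenated adapter
delta_sum = sum(b @ a for a, b in loras)   # sum of per-chunk updates
print(a_cat.shape, b_cat.shape)            # (32, 64) (64, 32)
print(np.allclose(delta_cat, delta_sum))   # True
```

This is why the “parametric memory” can grow with the document: each new chunk adds 8 more rank components rather than overwriting the earlier ones.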

The result is a dramatically different resource profile compared to the alternatives:

| Approach | VRAM (128K doc) | Update Latency | Cross-doc Synthesis |
|---|---|---|---|
| Long Context (KV cache) | ~12 GB | n/a | ✓ |
| Traditional Context Distillation | low | 40–100 s | ✓ |
| Doc-to-LoRA | <50 MB | <1 s | ✓ |

Real-World Example: MegaMart’s Weekly Brief

I always find these things easier to visualize with a domain example.

“MegaMart” HQ sends a 200-page weekly PDF to 500 store managers every Monday. It contains complex, interconnected data: regional supply chain disruptions, dynamic pricing models, localized competitor analysis, and fresh produce shelf-life projections.

The old way (RAG or long-context prompting): Managers query an AI using RAG. Because the data is chunked, the AI misses that an avocado shortage in Region A means pushing Guacamole kits in Region B: the cross-regional signal spans two sections of the document that never land in the same retrieval chunk. The alternative, passing the full 200 pages into the prompt, takes 15 seconds to load and costs $1.50 per question. With 500 managers asking dozens of questions daily, that’s tens of thousands of dollars a week in inference cost alone.
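A quick sanity check on that weekly figure. The $1.50 per question and the 500 managers come from the scenario; reading “dozens” as 24 questions a day over a 5-day work week is my own assumption.

```python
# Weekly cost of long-context prompting in the MegaMart scenario.
COST_PER_QUESTION_USD = 1.50  # from the scenario
MANAGERS = 500                # from the scenario
QUESTIONS_PER_DAY = 24        # ASSUMPTION: "dozens" read as two dozen per manager
WORKDAYS_PER_WEEK = 5         # ASSUMPTION

weekly_questions = MANAGERS * QUESTIONS_PER_DAY * WORKDAYS_PER_WEEK
weekly_cost = weekly_questions * COST_PER_QUESTION_USD
print(f"{weekly_questions:,} questions -> ${weekly_cost:,.0f} per week")
# 60,000 questions -> $90,000 per week
```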

The Doc-to-LoRA way: HQ passes the PDF through the hypernetwork once. It generates a “Week 42 Strategy LoRA”, a compact adapter under 50MB. Every store manager loads this adapter. They now query the AI with zero input context tokens. The model inherently knows the entire document, synthesizes cross-regional strategies correctly (Region A avocado shortage → push Guacamole kits in Region B), and answers for a fraction of a cent per query. The adapter is shared once; the context cost is never paid again.
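Mechanically, “loading the adapter” means adding a low-rank update to frozen weights. Here’s a minimal single-layer sketch using the standard LoRA formulation $y = Wx + BAx$ (omitting the $\alpha/r$ scaling many LoRA implementations apply); the dimensions and the random “Week 42” factors are hypothetical stand-ins, not D2L’s actual adapter.

```python
import numpy as np

rng = np.random.default_rng(42)
d_in, d_out, rank = 64, 64, 8  # ASSUMPTION: toy dimensions

w_frozen = rng.normal(size=(d_out, d_in))  # shared base weight, never modified

# Hypothetical "Week 42" adapter factors, produced once by the hypernetwork.
a_week42 = rng.normal(size=(rank, d_in))
b_week42 = rng.normal(size=(d_out, rank))


def lora_forward(x, w, a=None, b=None):
    """Base projection plus an optional low-rank update: W x + B (A x)."""
    y = w @ x
    if a is not None and b is not None:
        y = y + b @ (a @ x)  # two thin matmuls; B @ A is never materialized
    return y


x = rng.normal(size=d_in)  # a query's hidden state; no document tokens involved
y_adapted = lora_forward(x, w_frozen, a_week42, b_week42)
y_merged = (w_frozen + b_week42 @ a_week42) @ x  # same result with weights merged
print(np.allclose(y_adapted, y_merged))  # True
```

Keeping the factors separate means one base model can serve many adapters (next week’s brief is just a new `A`, `B` pair); merging them into `W` trades that flexibility for zero extra inference cost.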

Why This Matters

The efficiency gains here are honestly staggering:

- VRAM: For a 128K-token doc, a standard model needs 12GB of VRAM for the KV cache. Doc-to-LoRA needs less than 50MB.

- Latency: Update times drop from ~100 seconds to <1 second.

- Accuracy: 98.5% Needle-in-a-Haystack retrieval success at 4x the model’s native context limit.

We’re moving toward a world where LLMs aren’t static blocks of weights. With hypernetworks, we can have “living” models that adapt to new information or specialized tasks as fast as we can describe them.

While there are still challenges (the “accuracy gap” compared to slow, traditional distillation, or potential interference between multiple adapters), this is a massive leap for on-device intelligence and privacy-first personalization.

I’m excited to see where this goes. If you want to dive into the code yourself, Sakana has released the weights and the implementation on GitHub. It’s definitely worth a weekend project.

References: