- Context windows are getting bigger.

- Reasoning is getting worse.

- And somewhere, a Transformer is crying softly.

Context Rot

We thought million-token prompts would make models smarter. Instead, they made them confidently wrong at industrial scale.

The landscape of large language models is hitting a fundamental wall: Context Rot. Even as context windows expand into the millions, the quality of reasoning degrades steeply as prompts grow longer. We are moving past the era of “neural attention only” and into the era of Inference-Time Scaling.

The combination of the Recursive Language Model (RLM) paradigm and Google’s Agent Development Kit (ADK) represents the most significant architectural shift of 2026. This isn’t just about “more tokens”: it’s about treating the prompt as a programmatically accessible environment rather than a static input string.

Prompts as Environments

Traditional LLMs ingest the entire prompt directly into their neural network. RLMs invert this. An RLM treats a massive user prompt as an external environment accessed via a Read-Eval-Print Loop (REPL). Instead of feeding the full prompt into a Transformer, the RLM initializes a persistent programming environment where the prompt is stored as a variable. The model then writes Python code to “peek” into specific slices of this data.
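To make the "prompt as a variable" idea concrete, here is a minimal sketch of such an environment. The class name `PromptREPL`, the variable name `context`, and the methods are all hypothetical, invented for illustration; they are not taken from the RLM paper or from ADK. It uses bare `exec`, which a real system would need to sandbox.

```python
import contextlib
import io


class PromptREPL:
    """Toy persistent environment: the prompt lives in a variable, not in the model's window."""

    def __init__(self, prompt: str):
        # State persists across run() calls, like a real REPL session.
        self.namespace = {"context": prompt}

    def metadata(self) -> dict:
        # Constant-size summary the model could see instead of the full prompt.
        ctx = self.namespace["context"]
        return {"length": len(ctx), "prefix": ctx[:40]}

    def run(self, code: str) -> str:
        # Execute model-written code and return whatever it printed.
        # NOTE: exec on untrusted code is unsafe; this is illustration only.
        buffer = io.StringIO()
        with contextlib.redirect_stdout(buffer):
            exec(code, self.namespace)
        return buffer.getvalue()


repl = PromptREPL("chapter one ... " * 1000 + "The secret code is 7421. " + "filler " * 1000)

# A snippet the model might write to peek at one small slice of the prompt:
out = repl.run("i = context.find('secret'); print(context[i:i + 19])")
print(out)
```

The model only ever sees the metadata and the short printed slice, while the full string stays inside the environment. Because the namespace persists, a later snippet can reuse `i` without searching again.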

LLMs: “Here’s 800,000 tokens. Good luck, soldier.”

RLMs: “No ❤️”

Google ADK and the Recursive Loop

The RLM loop works in four stages:

- Metadata Initialization: The root model (depth=0) receives only constant-size metadata (length, prefix) about the prompt.

- Symbolic Decomposition: The agent writes code to partition the context into manageable chunks (e.g., splitting a book by chapters).

- Recursive Invocation: The agent calls rlm_agent(query, context), spawning child agents (depth=1+) to process those chunks.

- Aggregation: The root model collects results from sub-agents and builds the final answer in the REPL environment.
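The four stages above can be sketched as a short recursive function. This is a toy under stated assumptions, not the paper's or ADK's implementation: `stub_lm` stands in for a real model call, the decomposition is a fixed midpoint split rather than model-written code, and the `depth`, `max_chunk`, and `max_depth` parameters are hypothetical additions to the `rlm_agent(query, context)` call named above.

```python
def stub_lm(query: str, text: str) -> str:
    # Stand-in for a real model call (assumption): returns lines containing the query term.
    hits = [line for line in text.splitlines() if query.lower() in line.lower()]
    return "\n".join(hits)


def rlm_agent(query: str, context: str, depth: int = 0,
              max_chunk: int = 200, max_depth: int = 4) -> str:
    # Stage 1: only constant-size metadata is inspected up front.
    meta = {"length": len(context), "prefix": context[:20]}

    # Base case: the slice is small enough (or deep enough) for one direct call.
    if meta["length"] <= max_chunk or depth >= max_depth:
        return stub_lm(query, context)

    # Stage 2: symbolic decomposition, here a split on the middle line boundary.
    lines = context.splitlines()
    mid = len(lines) // 2
    chunks = ["\n".join(lines[:mid]), "\n".join(lines[mid:])]

    # Stage 3: recursive invocation; children run at depth + 1.
    partials = [rlm_agent(query, c, depth + 1, max_chunk, max_depth) for c in chunks]

    # Stage 4: aggregation of the non-empty child results.
    return "\n".join(p for p in partials if p)
```

In an actual RLM the model itself would choose the partitioning (by chapter, by file, by regex match) and write the aggregation logic; the fixed binary split here only shows the control flow.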

While the original MIT paper proved the theoretical scaling of RLMs to 10M+ tokens, Google’s Agent Development Kit (ADK) provides the infrastructure required for industrial application.

Beyond the MIT Implementation

The ADK implementation introduces several critical technical extensions:

- Massive Parallelism: The original RLM sub-agents were run sequentially to avoid quota limits. ADK enables concurrent task execution with configurable limits, drastically reducing latency for information-dense tasks.

- Connected Data Systems: While research RLMs viewed the prompt as a simple string, ADK uses Path objects. This allows the agent to invoke methods on data residing in Google Cloud Storage (GCS) buckets or local filesystems without ever loading the full content into the model’s primary window.

- Real-Time UI Visualization: Long-running recursive tasks can be opaque. ADK streams events in real-time, allowing developers to visualize the recursion tree and validate the agent’s decomposition strategy as it happens.
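The idea of concurrent sub-agents with a configurable limit can be illustrated with plain `asyncio`. This sketch does not use ADK's API at all; `sub_agent`, `run_bounded`, and `max_concurrency` are hypothetical names, and the sub-agent is a stub that counts words after a short simulated delay.

```python
import asyncio


async def sub_agent(chunk: str) -> int:
    # Stand-in for a child agent call (assumption): counts words after a simulated delay.
    await asyncio.sleep(0.01)
    return len(chunk.split())


async def run_bounded(chunks: list[str], max_concurrency: int = 3) -> list[int]:
    # The semaphore caps how many sub-agents are in flight at once.
    gate = asyncio.Semaphore(max_concurrency)

    async def guarded(chunk: str) -> int:
        async with gate:
            return await sub_agent(chunk)

    # gather preserves input order, so results line up with chunks.
    return await asyncio.gather(*(guarded(c) for c in chunks))


chunks = ["a b c", "d e", "f", "g h i j"]
results = asyncio.run(run_bounded(chunks, max_concurrency=2))
print(results)
```

Setting the limit to 1 reproduces sequential execution, which is a convenient way to stay under a quota while keeping the same code path.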

Context Was Never the Problem!

We thought the bottleneck was: “Not enough tokens.” It wasn’t.

The real bottleneck was: reasoning over too much stuff at once.

RLMs + ADK flip the script:

- Context lives outside the model

- Reasoning happens symbolically

- Scale comes from structure, not brute force

Engineering Challenges: Alignment and Cost

Recursion is powerful, but it can spread small mistakes and hidden spend across the system.

- Cost Variance: On average, the cost of RLM inference remains comparable to that of a base LM call, but tail-end costs can spike if an agent enters a complex decomposition loop.

- Stopping Conditions: A critical missing layer is structural stop conditions: knowing when more comparison no longer yields more correctness.
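One way to picture both concerns is a small guard that every sub-call must pass through, plus a simple diminishing-returns check. Everything here is a hypothetical sketch: `CallBudget`, `BudgetExceeded`, `should_stop`, and the `min_gain` threshold are illustrative names, and the "score" is assumed to come from whatever quality signal the system has available.

```python
from dataclasses import dataclass


class BudgetExceeded(RuntimeError):
    """Raised when a sub-call would cross a configured limit."""


@dataclass
class CallBudget:
    max_calls: int
    max_depth: int
    calls: int = 0

    def charge(self, depth: int) -> None:
        # Refuse the call before spending anything if a limit would be crossed.
        if depth > self.max_depth:
            raise BudgetExceeded(f"depth {depth} exceeds limit {self.max_depth}")
        if self.calls >= self.max_calls:
            raise BudgetExceeded(f"call limit {self.max_calls} reached")
        self.calls += 1


def should_stop(scores: list[float], min_gain: float = 0.01) -> bool:
    # Stop when the latest round improved the score by less than min_gain.
    return len(scores) >= 2 and scores[-1] - scores[-2] < min_gain
```

A hard budget bounds the tail cost directly, while the gain check is only a heuristic stand-in for the structural stop condition described above.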

Symbolic Recursion vs. Neural Attention

- Neural attention: I will softly weight everything and hope for the best.

- Symbolic recursion: I will write a loop. And then another loop. And then a nested loop.

The technical “secret sauce” of RLMs is Symbolic Recursion. In traditional scaffolds, agents verbalize sub-calls (autoregressive delegation), which limits output length and precision. RLMs generate sub-calls programmatically.

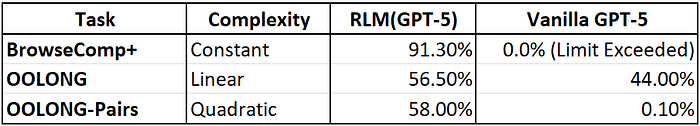

An RLM can write a loop that launches parallel agents to analyze pairs of chunks. This lets it handle tasks like OOLONG-Pairs, which requires quadratic processing complexity and on which even vanilla GPT-5 fails catastrophically.
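The quadratic pattern is easy to see in code. The sketch below is an assumption-laden toy: `compare_pair` stands in for a sub-agent call and simply checks for a shared word, and the loop runs sequentially rather than in parallel. It is not the OOLONG-Pairs task itself, only the shape of a pairwise decomposition.

```python
from itertools import combinations


def compare_pair(a: str, b: str) -> bool:
    # Stand-in for a sub-agent call (assumption): do the two chunks share any word?
    return bool(set(a.split()) & set(b.split()))


def related_pairs(chunks: list[str]) -> list[tuple[int, int]]:
    # One sub-call per unordered pair: n * (n - 1) / 2 calls for n chunks.
    return [
        (i, j)
        for (i, a), (j, b) in combinations(enumerate(chunks), 2)
        if compare_pair(a, b)
    ]


chunks = ["alice met bob", "carol left", "bob stayed", "carol met dave"]
pairs = related_pairs(chunks)
print(pairs)
```

Four chunks produce six comparisons; a thousand chunks would produce 499,500, which is why the loop has to be generated programmatically rather than spelled out call by call in the model's output.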

Performance Benchmarks

Vanilla models choke. RLMs breathe.

Why the RLM is More Than Just a “grep” Sub-agent

The fundamental shift of RLMs lies in treating the sub-agent as a native function within a stateful REPL environment rather than a disconnected tool. While traditional coding agents use an external controller to orchestrate independent tools like grep, the RLM defines a unified LM ↔ REPL + Prompt interface.

In this paradigm, the massive user prompt is not a static token stream to be compacted into a narrow window: it’s a programmatically accessible variable within the REPL. This integration allows the model to programmatically examine and decompose contexts, launching recursive sub-agents as internal algorithmic steps. This moves AI toward a unified neurosymbolic execution where symbolic code manages precise data retrieval while neural logic handles fuzzy reasoning, effectively decoupling the reasoning process from the physical constraints of any single neural LM call.

By defining sub-agents as functions inside the REPL, the RLM operates as a dynamic corporate hierarchy of stateless LLMs. Instead of a single “CEO” model attempting to ingest 10,000 pages at once, which leads to the inevitable “context rot”, the system scripts a custom organization on the fly to handle specific data slices. This allows the system to scale to enormous contexts, up to two orders of magnitude beyond the model’s native limit, as the neural logic only ever interacts with the symbolic handles managed by the REPL. A single LM with a fixed context window can, in principle, solve arbitrarily large problems by recursively calling itself within a controlled loop, creating a task-agnostic framework capable of navigating massive datasets with programmatic precision.